How to Deploy ML Models Efficiently and Safely: An Easy Guide

Machine learning is no longer just about building models; it's about deploying them effectively in real-world environments. A model that performs well in development can still fail in production without a sound deployment strategy.

In this guide, we'll walk through the practices that help ensure your models are scalable, reliable, and production-ready.

What is ML Model Deployment?

ML model deployment is the process of integrating a trained machine learning model into a production environment where it can make predictions on real-world data.

This typically involves:

- Packaging the model

- Hosting it on servers or cloud platforms

- Creating APIs for interaction

- Monitoring performance over time

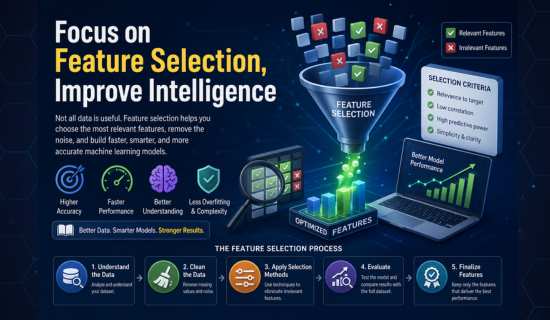

Why Deployment Matters

A model that isn’t deployed has zero business impact.

Effective deployment ensures:

- Real-time predictions

- Scalability for large datasets

- Integration with applications

- Continuous improvement through feedback loops

1. Choose the Right Deployment Strategy

There are several ways to deploy ML models depending on your use case:

Batch Deployment

- Processes data in chunks

- Ideal for reports and analytics

Real-Time Deployment

- Instant predictions via APIs

- Used in chatbots, fraud detection, recommendations

Edge Deployment

- Runs on local devices (IoT, mobile)

- Reduces latency and dependence on an internet connection
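The difference between batch and real-time serving is easier to see in code. The following is a minimal sketch, not a production pattern: `toy_model`, `predict_realtime`, and `predict_batch` are hypothetical names, and the "model" simply averages its inputs so the example stays self-contained.

```python
from typing import Callable, Iterable, Iterator, List, Sequence

Features = Sequence[float]
Model = Callable[[List[Features]], List[float]]


def toy_model(rows: List[Features]) -> List[float]:
    """Hypothetical stand-in for a trained model: scores a list of rows."""
    return [sum(row) / len(row) for row in rows]


def predict_realtime(model: Model, row: Features) -> float:
    """Real-time style: score a single request as soon as it arrives."""
    return model([row])[0]


def predict_batch(model: Model, rows: Iterable[Features],
                  chunk_size: int = 1000) -> Iterator[float]:
    """Batch style: walk a large dataset in fixed-size chunks."""
    chunk: List[Features] = []
    for row in rows:
        chunk.append(row)
        if len(chunk) == chunk_size:
            yield from model(chunk)
            chunk = []
    if chunk:  # score the final, partially filled chunk
        yield from model(chunk)


data = [[1.0, 3.0], [2.0, 4.0], [10.0, 20.0]]
batch_scores = list(predict_batch(toy_model, data, chunk_size=2))
single_score = predict_realtime(toy_model, data[0])
```

Because `predict_batch` is a generator, it never holds more than one chunk in memory, which is the property that makes batch jobs practical for large datasets.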

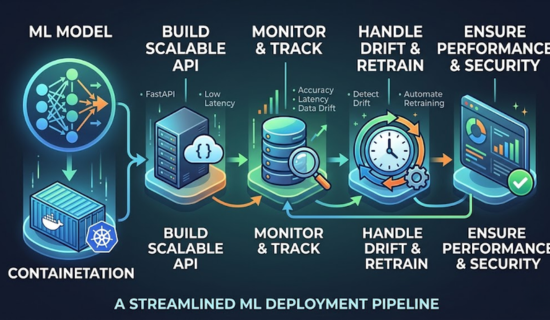

2. Containerization for Portability

Using containers ensures your model runs consistently across environments.

Popular tools:

- Docker

- Kubernetes

Best Practice:

Package your model with all dependencies using Docker, then manage scaling using Kubernetes.

3. Build Scalable APIs

Expose your model through APIs so applications can interact with it.

Frameworks:

- FastAPI

- Flask

Tips:

- Use REST or GraphQL APIs

- Ensure low latency

- Implement caching where possible
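As a rough illustration of the caching tip, here is a framework-agnostic sketch of a prediction handler. It uses only the standard library; in practice a FastAPI or Flask route would wrap logic like this. The names `toy_model`, `cached_predict`, and `handle_predict`, and the `{"features": [...]}` request shape, are assumptions made for the example.

```python
import json
from functools import lru_cache
from typing import Tuple


def toy_model(features: Tuple[float, ...]) -> float:
    """Hypothetical stand-in for an expensive model call."""
    return round(sum(features) / len(features), 4)


@lru_cache(maxsize=1024)
def cached_predict(features: Tuple[float, ...]) -> float:
    # lru_cache needs hashable arguments, hence a tuple rather than a list.
    return toy_model(features)


def handle_predict(body: str) -> Tuple[int, str]:
    """Take a JSON request body, return (HTTP status code, JSON response body)."""
    try:
        payload = json.loads(body)
        features = tuple(float(x) for x in payload["features"])
        if not features:
            raise ValueError("features must not be empty")
    except (KeyError, TypeError, ValueError) as exc:
        # Malformed input is the caller's fault, so answer with a 400.
        return 400, json.dumps({"error": str(exc)})
    return 200, json.dumps({"prediction": cached_predict(features)})
```

An in-process cache like this only helps when identical inputs repeat and the model is deterministic; with several replicas behind a load balancer, a shared cache is usually the better fit.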

4. Version Control for ML Models

Just like code, ML models must be versioned.

Tools:

- Git

- DVC

Why it matters:

- Track model changes

- Roll back to previous versions

- Ensure reproducibility
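To make tracking and rollback concrete, here is a small sketch of content-addressed model versions. It is not how Git or DVC work internally and does not use their APIs; `fingerprint` and `ModelRegistry` are hypothetical names, and the registry lives in memory purely for illustration.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Dict, List


def fingerprint(artifact: bytes) -> str:
    """Content hash of a serialized model: identical bytes always give the same ID."""
    return hashlib.sha256(artifact).hexdigest()[:12]


@dataclass
class ModelRegistry:
    """Hypothetical in-memory registry; a real one would persist its state."""
    artifacts: Dict[str, bytes] = field(default_factory=dict)
    history: List[str] = field(default_factory=list)  # deployed IDs, oldest first

    def deploy(self, artifact: bytes) -> str:
        version = fingerprint(artifact)
        self.artifacts[version] = artifact
        self.history.append(version)
        return version

    @property
    def current(self) -> str:
        return self.history[-1]

    def rollback(self) -> str:
        """Drop the latest deployment and return to the one before it."""
        if len(self.history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.history.pop()
        return self.current
```

Deriving the version ID from the artifact's bytes means the same model can never end up under two different versions, which helps with reproducibility.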

5. Monitor Model Performance

After deployment, continuous monitoring is essential.

Metrics to track:

- Accuracy

- Latency

- Data drift

- Model drift

Tools:

- Prometheus

- Grafana
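Before wiring up a metrics stack, it can help to see what tracking accuracy and latency amounts to. The sketch below keeps a rolling window of recent requests in plain Python; it does not use the Prometheus or Grafana APIs, and `RollingMonitor` is a hypothetical class. It also assumes ground-truth labels eventually arrive, which in many systems happens with a delay.

```python
import math
from collections import deque
from typing import Deque


class RollingMonitor:
    """Keeps the most recent `window` observations of latency and correctness."""

    def __init__(self, window: int = 500) -> None:
        self.latencies_ms: Deque[float] = deque(maxlen=window)
        self.correct: Deque[bool] = deque(maxlen=window)

    def record(self, latency_ms: float, was_correct: bool) -> None:
        self.latencies_ms.append(latency_ms)
        self.correct.append(was_correct)

    def accuracy(self) -> float:
        """Share of recent predictions that matched the ground truth."""
        return sum(self.correct) / len(self.correct)

    def latency_percentile(self, pct: float) -> float:
        """Nearest-rank percentile, e.g. pct=95 for p95 latency."""
        ordered = sorted(self.latencies_ms)
        rank = max(1, math.ceil(pct / 100 * len(ordered)))
        return ordered[rank - 1]
```

Tail percentiles such as p95 are usually more informative than the mean, because a handful of slow requests can hide behind a healthy average.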

6. Handle Data Drift & Model Retraining

Real-world data changes over time, which affects model accuracy.

Best Practices:

- Detect drift automatically

- Retrain models periodically

- Use pipelines for automation

Tools:

- Apache Airflow

- Kubeflow
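One common way to detect drift automatically is the Population Stability Index (PSI), which compares how a feature is distributed in the training data against live traffic. The article does not prescribe a specific metric, so treat this as one possible sketch. The function names are hypothetical, and the 0.2 threshold is an assumption: values in the 0.1 to 0.25 range are often quoted as rules of thumb, not hard limits.

```python
import math
from typing import List, Sequence


def bin_fractions(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Share of values falling in each bin; `edges` are the interior cut points."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        idx = sum(v >= e for e in edges)  # number of cut points at or below v
        counts[idx] += 1
    return [c / len(values) for c in counts]


def psi(expected: Sequence[float], actual: Sequence[float],
        edges: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index between a reference sample and a live sample."""
    e_frac = bin_fractions(expected, edges)
    a_frac = bin_fractions(actual, edges)
    total = 0.0
    for e, a in zip(e_frac, a_frac):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        total += (a - e) * math.log(a / e)
    return total


def needs_retraining(score: float, threshold: float = 0.2) -> bool:
    # The threshold is an assumed rule of thumb; tune it per feature and use case.
    return score > threshold
```

A scheduled pipeline (for example an Airflow or Kubeflow job) could compute this score per feature and trigger retraining when `needs_retraining` returns `True`.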

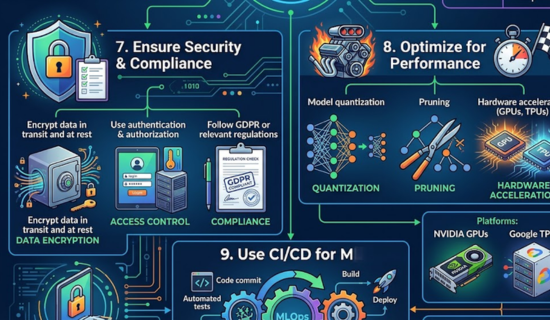

7. Ensure Security & Compliance

ML systems often handle sensitive data.

Key measures:

- Encrypt data in transit and at rest

- Use authentication & authorization

- Follow GDPR or relevant regulations
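As one example of authenticating callers, a prediction endpoint can require each request to carry an HMAC signature computed with a shared secret. This is a minimal sketch using Python's standard `hmac` module; the function names `sign` and `is_authentic` and the idea of signing the raw request body are assumptions for illustration, and a real system would also need key rotation and replay protection.

```python
import hashlib
import hmac


def sign(secret: bytes, body: bytes) -> str:
    """HMAC-SHA256 signature a client would send alongside its request body."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def is_authentic(secret: bytes, body: bytes, signature: str) -> bool:
    """Verify the signature using a constant-time comparison."""
    expected = sign(secret, body)
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(expected.encode(), signature.encode())
```

A signature proves who sent the request and that the body was not altered, but it does not hide the data; encryption in transit (TLS) is still needed for that.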

8. Optimize for Performance

A slow model can ruin user experience.

Optimization techniques:

- Model quantization

- Pruning

- Hardware acceleration (GPUs, TPUs)

Platforms:

- NVIDIA GPUs

- Google TPUs
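To give a feel for what quantization does, the sketch below maps floating-point weights onto 8-bit integers and back. It is a simplified, pure-Python illustration of affine (min/max) quantization, not the routine any particular framework uses; `quantize_int8` and `dequantize` are hypothetical names.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float, float]:
    """Map floats onto the int8 range [-128, 127]; returns (values, scale, lo)."""
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / 255 or 1.0  # fall back to 1.0 when all weights are equal
    q = [max(-128, min(127, round((w - lo) / scale) - 128)) for w in weights]
    return q, scale, lo


def dequantize(q: List[int], scale: float, lo: float) -> List[float]:
    """Approximate reconstruction of the original floats."""
    return [(v + 128) * scale + lo for v in q]


weights = [-1.0, 0.0, 0.5, 1.0]
q, scale, lo = quantize_int8(weights)
restored = dequantize(q, scale, lo)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight now fits in one byte instead of four (for float32), at the cost of a reconstruction error of at most about half of `scale` per weight. Whether that loss is acceptable has to be checked against the model's accuracy on real data.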

9. Use CI/CD for ML Pipelines

Automate deployment using CI/CD pipelines.

Tools:

- Jenkins

- GitHub Actions

Benefits:

- Faster updates

- Reduced human error

- Continuous integration of improvements
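A CI/CD pipeline for ML typically includes a quality gate that decides whether a newly trained model may replace the one in production. Here is a minimal sketch of such a check. The metric names (`accuracy`, `p95_latency_ms`), the default thresholds, and the function `deployment_gate` are all assumptions for illustration; Jenkins or GitHub Actions would simply run a script like this and fail the job on a non-empty result.

```python
from typing import Dict, List


def deployment_gate(candidate: Dict[str, float], production: Dict[str, float],
                    max_latency_ms: float = 200.0,
                    min_gain: float = 0.0) -> List[str]:
    """Return the reasons to block a release; an empty list means the gate passes."""
    failures: List[str] = []
    # The candidate must at least match production accuracy (plus any required gain).
    if candidate["accuracy"] < production["accuracy"] + min_gain:
        failures.append("accuracy below production baseline")
    # The latency budget is absolute and independent of the current model.
    if candidate["p95_latency_ms"] > max_latency_ms:
        failures.append("p95 latency over budget")
    return failures
```

In a pipeline step, the calling script would exit with a non-zero status whenever the returned list is not empty, which stops the deployment and leaves the current model in place.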

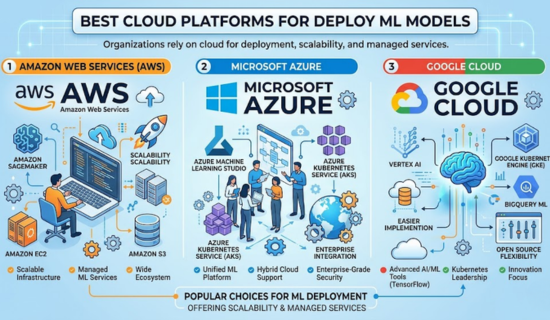

10. Cloud vs On-Premise Deployment

Cloud Platforms

- Amazon Web Services

- Microsoft Azure

- Google Cloud

Advantages:

- Scalability

- Managed services

- Easy integration

On-Premise

- Better control

- Suitable for sensitive data

Common Challenges in ML Deployment

- Model drift

- Infrastructure complexity

- Latency issues

- Lack of monitoring

- Data inconsistency

Pro Tips for Successful Deployment

- Start simple, then scale

- Use microservices architecture

- Log everything

- Test in staging before production

- Collaborate between data scientists and engineers

Conclusion

Deploying ML models is a critical step in turning data science work into real business value. By following these best practices (containerization, monitoring, versioning, and automation), you can build systems that are not just intelligent, but also reliable and scalable.

| # | Topic | Key Points | Tools / Platforms |
|---|---|---|---|
| 1 | Deployment Strategies | Batch: processes data in chunks (reports, analytics); Real-Time: instant predictions via APIs (chatbots, fraud detection); Edge: runs on devices (IoT, mobile), reduces latency | n/a |
| 2 | Containerization | Ensures consistency across environments; package model with dependencies | Docker, Kubernetes |
| 3 | Scalable APIs | Expose models via APIs; use REST/GraphQL; ensure low latency and caching | FastAPI, Flask |
| 4 | Version Control | Track changes; roll back models; ensure reproducibility | Git, DVC |
| 5 | Monitoring Performance | Track accuracy and latency; detect data and model drift | Prometheus, Grafana |
| 6 | Data Drift & Retraining | Detect drift automatically; retrain periodically; use pipelines | Apache Airflow, Kubeflow |
| 7 | Security & Compliance | Encrypt data; authentication and authorization; follow regulations (e.g., GDPR) | n/a |
| 8 | Performance Optimization | Quantization, pruning; use GPUs/TPUs for speed | NVIDIA GPUs, Google TPUs |
| 9 | CI/CD Pipelines | Automate deployment; faster updates; reduce errors | Jenkins, GitHub Actions |
| 10 | Cloud vs On-Premise | Cloud: scalable, managed services; On-Premise: more control, suitable for sensitive data | Amazon Web Services, Microsoft Azure, Google Cloud |
| 11 | Common Challenges | Model drift; infrastructure complexity; latency issues; data inconsistency | n/a |
| 12 | Pro Tips | Start simple; use microservices; log everything; test before production; collaborate across teams | n/a |

Frequently Asked Questions (FAQs)

Q: What is ML model deployment?

ML model deployment is the process of taking a trained machine learning model and integrating it into a production environment. It allows the model to work with real-world data and generate predictions that users or systems can actually use.

Q: Why is ML model deployment important?

ML model deployment is essential because it turns a model from an experiment into a working solution. Without deployment, even the most accurate model cannot deliver value, so deployment is what converts data insights into actionable outcomes.

Q: What are the best practices for ML model deployment?

Several practices contribute to a successful deployment. Containerization tools like Docker improve consistency across environments. Building scalable APIs, monitoring performance, and handling data drift are equally important. Together, these practices help keep a deployed model reliable and efficient.

Q: What is the difference between batch and real-time deployment?

On one hand, batch deployment processes data at scheduled intervals, making it suitable for reports and large datasets. On the other hand, real-time deployment provides instant predictions through APIs. Consequently, it is ideal for applications that require immediate responses, such as recommendation systems or fraud detection.

Q: What tools are used for ML model deployment?

When it comes to tools, there are several widely used options. For example, Docker is commonly used for containerization, while Kubernetes helps manage scaling. Furthermore, frameworks like FastAPI are useful for building APIs, and Apache Airflow supports workflow automation. Altogether, these tools simplify deployment processes.

Q: What is MLOps in machine learning?

Essentially, MLOps (Machine Learning Operations) is a set of practices that combines machine learning with DevOps principles. More specifically, it focuses on automating and managing the entire lifecycle of ML models. As a result, it ensures smoother deployment, monitoring, and continuous improvement.

Q: How do you monitor deployed ML models?

After deployment, monitoring becomes crucial. For example, you should track accuracy, latency, and potential data drift. In addition, tools like Prometheus and Grafana can help visualize performance metrics. Therefore, consistent monitoring ensures that the model continues to perform effectively over time.

Q: What is model drift in machine learning?

Over time, real-world data may change, and as a result, the model’s performance can decline. This phenomenon is known as model drift. In simple terms, it occurs when the model no longer aligns with current data patterns, making retraining necessary.

Q: What are common challenges in ML model deployment?

ML deployment comes with several challenges. Data drift, scalability limitations, and infrastructure complexity can all arise, and a lack of proper monitoring makes each of them harder to spot. Addressing these challenges proactively is critical.

Q: Which cloud platforms are best for deploying ML models?

Nowadays, many organizations rely on cloud platforms for deployment. For example, Amazon Web Services, Microsoft Azure, and Google Cloud are among the most popular choices. Not only do they offer scalability, but they also provide managed services for easier implementation.

Q: How often should ML models be retrained?

In general, the retraining frequency depends on the use case and data changes. However, it is advisable to retrain models regularly or whenever performance drops significantly. This way, you can ensure the model remains accurate and relevant.

Q: Can ML models be deployed without coding?

Yes, some platforms now offer low-code or no-code deployment options. While these tools simplify the process, a basic understanding of deployment concepts is still beneficial, because it helps you make better decisions and avoid potential issues.

Author bio:

Zarirah Asif is a creative content writer who loves turning ideas into engaging words. She writes SEO-friendly articles that are easy to read and useful for readers. Her goal is to help brands stand out with quality content. She is always learning and improving her writing skills.
