Feature Selection: A Complete Guide for Better AI Models

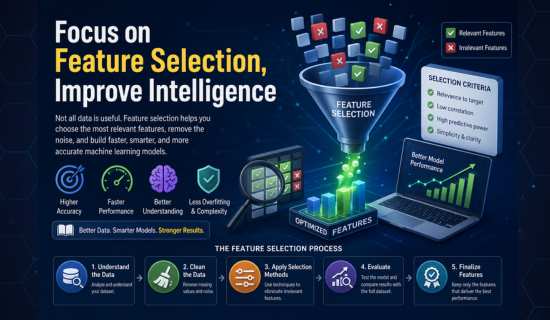

Focus on feature selection to build smarter models

In machine learning, data is the most important ingredient.

However, not all data is useful.

Feature selection exists to handle this problem.

It helps you pick the inputs that improve your model's performance.

The result is a model that trains faster and often predicts more accurately.

This guide covers what you need to know, from the basics to more advanced techniques.

What is Feature Selection?

Feature selection means choosing the most useful features from your dataset.

The selected features will help your model to make accurate predictions.

Consider a house price model:

The size of the house matters a great deal.

The wall color has little or no effect on its value.

You keep the important features and discard the rest.

Why Feature Selection Is Important

First, feature selection can improve accuracy.

Irrelevant data confuses the model and hurts its performance.

It also helps reduce overfitting.

Finally, a model with fewer features is easier to understand.

Feature Selection in AI

Many AI systems rely on feature selection to work well.

They often handle large datasets.

Too many features cause several problems.

Each extra feature makes the system more complex and less efficient.

Feature selection produces better outcomes by keeping only the inputs that matter.

The Criteria for Feature Selection

Good feature selection follows clear criteria.

Consider the following factors:

Relevance to the target variable

Low correlation with other features

High predictive power

Simplicity and clarity

The performance of machine learning models improves when you select suitable features.
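
The first two criteria can be checked with plain correlations. The sketch below is only an illustration: the house data is synthetic, and the column names (`size`, `rooms`, `wall_color`) and all numbers are invented for the example.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 500

# Synthetic house data (all values invented for illustration).
size = rng.normal(150, 40, n)                      # living area
rooms = size / 30 + rng.normal(0, 0.3, n)          # nearly redundant with size
wall_color = rng.integers(0, 5, n).astype(float)   # unrelated to price
price = 2000 * size + rng.normal(0, 20000, n)

features = {"size": size, "rooms": rooms, "wall_color": wall_color}


def abs_corr(a, b):
    """Absolute Pearson correlation between two 1-D arrays."""
    return abs(np.corrcoef(a, b)[0, 1])


# Relevance: correlation of each feature with the target.
relevance = {name: abs_corr(col, price) for name, col in features.items()}

# Redundancy: correlation between each pair of features.
names = list(features)
redundancy = {
    (a, b): abs_corr(features[a], features[b])
    for i, a in enumerate(names)
    for b in names[i + 1:]
}

for name, score in relevance.items():
    print(f"{name:10s} relevance to price: {score:.2f}")
for pair, score in redundancy.items():
    print(f"{pair} redundancy: {score:.2f}")
```

A feature with high relevance is a candidate to keep. When two features are strongly correlated with each other, keeping only one of them is usually enough. Pearson correlation only captures linear relationships, so treat it as a first check rather than a final verdict.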

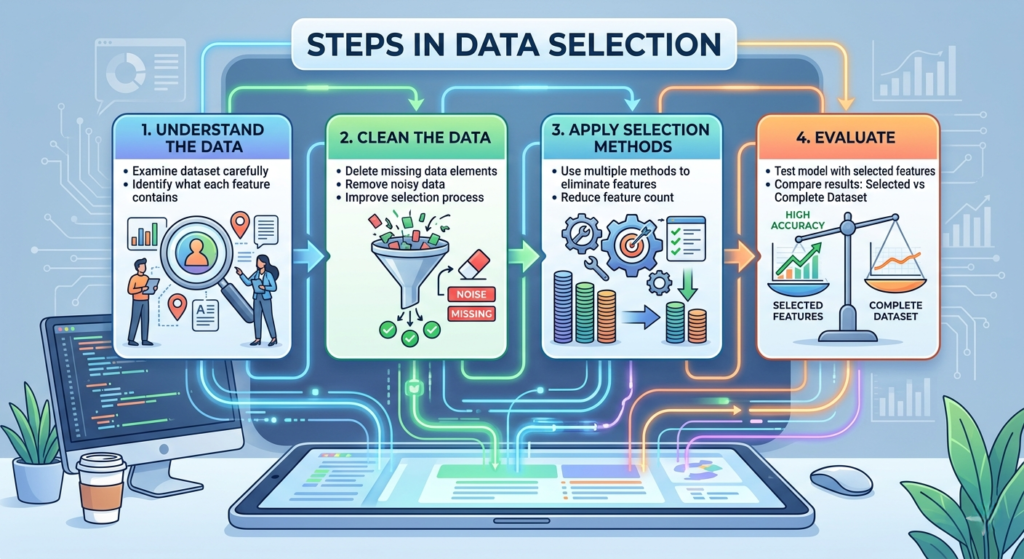

Steps in Feature Selection

The feature selection process follows these steps.

- Understand the Data

Examine your dataset carefully.

Understand what each feature contains.

- Clean the Data

Handle missing values and remove noisy data.

Clean data makes the selection process more reliable.

- Apply Selection Methods

Use one or more selection methods to eliminate irrelevant features.

- Evaluate

Test your model using the features you selected.

Compare the results with those from the complete dataset.

- Finalize Features

Keep only the features that deliver the best performance.

| Step | Description |
|---|---|
| Understand the Data | Analyze the dataset carefully and understand what each feature represents. |
| Clean the Data | Remove missing values and noisy data to improve selection quality. |
| Apply Selection Methods | Use different feature selection techniques to eliminate irrelevant features. |
| Evaluate | Test the model using selected features and compare results with the full dataset. |
| Finalize Features | Keep only the features that deliver the best performance. |
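
The evaluation step can be sketched with scikit-learn. This is a minimal example under stated assumptions: the dataset is synthetic, and the choices of `k=5`, logistic regression, and 5-fold cross-validation are illustrative, not recommendations from this guide.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data: 30 features, only 5 of which carry signal.
X, y = make_classification(
    n_samples=600, n_features=30, n_informative=5, n_redundant=0,
    n_clusters_per_class=1, random_state=0,
)

# Baseline: train on all features.
full_model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))

# Candidate: keep the 5 highest-scoring features. The selector sits inside
# the pipeline so it is refit on each training fold, never on test data.
selected_model = make_pipeline(
    StandardScaler(),
    SelectKBest(score_func=f_classif, k=5),
    LogisticRegression(max_iter=1000),
)

full_scores = cross_val_score(full_model, X, y, cv=5)
selected_scores = cross_val_score(selected_model, X, y, cv=5)

print(f"all 30 features: {full_scores.mean():.3f}")
print(f"top 5 features:  {selected_scores.mean():.3f}")
```

If the reduced model scores about as well as the full one, the dropped features were probably not contributing much. Placing the selector inside the pipeline matters: selecting features on the whole dataset before cross-validation leaks information from the test folds.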

Types of Feature Selection Methods

There are three main families of feature selection methods.

Each works through a different mechanism.

- Filter Methods

These methods use statistical tests to score and rank features. Examples include correlation and chi-square tests.

- Wrapper Methods

These methods train a model on different feature subsets and compare the results.

Model performance determines which subset is kept.

They tend to be accurate but take more time to run.

- Embedded Methods

These methods select features as part of model training.

They are usually fast and still perform well.
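
Here is a rough side-by-side sketch of the three families using scikit-learn. The specific choices are assumptions made for illustration: an ANOVA F-test as the filter, forward sequential selection as the wrapper, and an L1-penalised linear SVM as the embedded method, all on synthetic data.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import (
    SelectFromModel,
    SelectKBest,
    SequentialFeatureSelector,
    f_classif,
)
from sklearn.linear_model import LogisticRegression
from sklearn.svm import LinearSVC

X, y = make_classification(
    n_samples=400, n_features=12, n_informative=4, n_redundant=0,
    n_clusters_per_class=1, random_state=0,
)

# Filter: score each feature on its own with a statistical test.
filter_sel = SelectKBest(score_func=f_classif, k=4).fit(X, y)

# Wrapper: add one feature at a time, keeping whichever addition gives
# the best cross-validated model score, until 4 features are chosen.
wrapper_sel = SequentialFeatureSelector(
    LogisticRegression(max_iter=1000),
    n_features_to_select=4, direction="forward", cv=3,
).fit(X, y)

# Embedded: the L1 penalty pushes some coefficients to zero during
# training; SelectFromModel keeps the features with non-zero weights.
l1_model = LinearSVC(penalty="l1", dual=False, C=0.1, max_iter=5000, random_state=0)
embedded_sel = SelectFromModel(l1_model).fit(X, y)

for name, sel in [
    ("filter", filter_sel),
    ("wrapper", wrapper_sel),
    ("embedded", embedded_sel),
]:
    print(f"{name:9s} keeps columns {sel.get_support(indices=True)}")
```

The three selectors will not always agree. The filter never trains a model, the wrapper trains many, and the embedded method trains one, which is where the speed and accuracy trade-offs come from.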

Feature Selection Tools

Several software tools support feature selection.

Four common options are:

- Scikit-learn

- XGBoost

- TensorFlow

- Kaggle notebooks

These tools streamline much of the selection work.

Feature Selection for XGBoost

XGBoost is a powerful algorithm.

It produces feature importance scores that show which features matter most.

You can remove the features that receive low scores.

The result is a faster, simpler model.
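
The pruning pattern can be sketched as follows. To keep the example dependent on scikit-learn only, `GradientBoostingClassifier` stands in for XGBoost here; XGBoost's scikit-learn-style estimators expose an attribute with the same name, `feature_importances_`, so the same idea should carry over. The "above the mean" cut-off is a hypothetical choice for the example.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.model_selection import train_test_split

X, y = make_classification(
    n_samples=500, n_features=15, n_informative=4, n_redundant=0,
    random_state=1,
)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=1)

# Stand-in for an XGBoost model (assumption: the same pattern applies there).
model = GradientBoostingClassifier(random_state=1).fit(X_train, y_train)
importances = model.feature_importances_

# Hypothetical cut-off: keep features whose importance is above the mean.
keep = importances > importances.mean()

# Retrain on the reduced feature set and compare on held-out data.
pruned = GradientBoostingClassifier(random_state=1).fit(X_train[:, keep], y_train)

print("kept columns:", np.flatnonzero(keep))
print(f"all features:    {model.score(X_test, y_test):.3f}")
print(f"pruned features: {pruned.score(X_test[:, keep], y_test):.3f}")
```

Always compare the pruned model against the full one on data it has not seen. A low importance score suggests a feature is a candidate for removal, but only the comparison confirms it.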

Feature Selection with Random Forest

Random Forest is a popular tool for feature selection.

It uses importance values to rank all available features.

Every tree contributes to the final score.

The model computes that score by aggregating the results from all trees.

The technique works well on large datasets.
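
The per-tree aggregation can be seen directly in scikit-learn. This is a small sketch on synthetic data; the forest size and dataset shape are arbitrary choices for the example.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

X, y = make_classification(
    n_samples=500, n_features=10, n_informative=3, n_redundant=0,
    random_state=0,
)

forest = RandomForestClassifier(n_estimators=100, random_state=0).fit(X, y)

# Each fitted tree carries its own importance vector.
per_tree = np.array([tree.feature_importances_ for tree in forest.estimators_])

# Averaging over trees gives one score per feature.
manual = per_tree.mean(axis=0)
manual = manual / manual.sum()

# The forest exposes the aggregated scores as a single attribute.
ranking = np.argsort(forest.feature_importances_)[::-1]

for idx in ranking:
    print(f"feature {idx}: forest={forest.feature_importances_[idx]:.3f} "
          f"manual={manual[idx]:.3f}")
```

These impurity-based scores are computed on the training data and can favour features with many distinct values, so it is worth confirming the ranking with a held-out evaluation.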

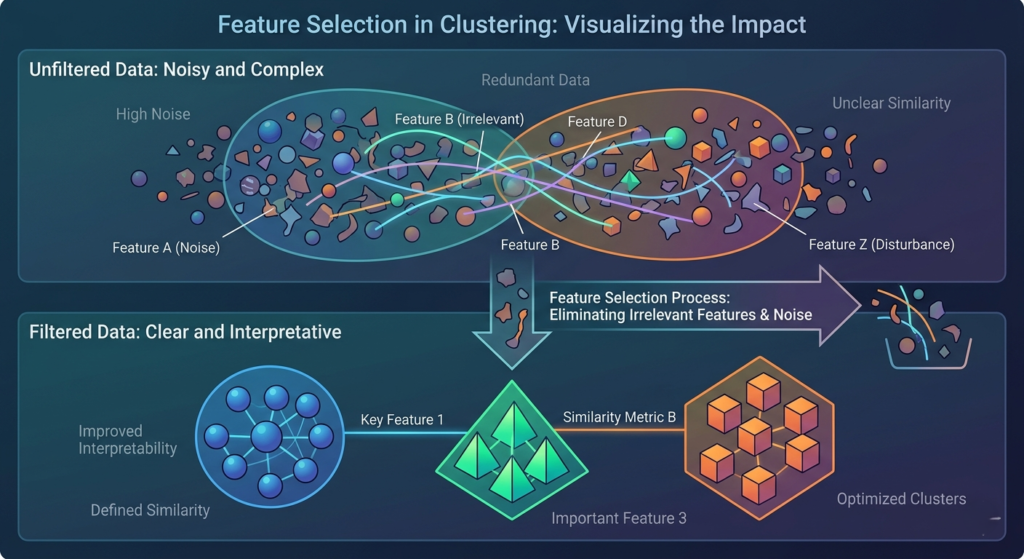

Feature Selection for Clustering

Clustering has no target variable.

The features you select determine how similarity between data points is measured.

Remove features that add noise without helping to separate the groups.

The result is clusters that are easier to understand.

Key points to understand feature selection in clustering:

- Clustering works without a specific target variable

- Feature selection defines similarity between data points

- Remove irrelevant features that disturb clustering

- Eliminate features that add unnecessary noise

- Cleaner features improve the clarity and interpretability of clusters
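
The effect of noisy features on clustering can be illustrated with a small experiment. Everything here is an assumption made for the sketch: the blob centers, the eight pure-noise columns, k-means with three clusters, and the silhouette score as the quality measure.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs
from sklearn.metrics import silhouette_score

rng = np.random.default_rng(0)

# Two informative features that form three clear groups.
X_signal, _ = make_blobs(
    n_samples=300, centers=[[-6, 0], [0, 6], [6, 0]],
    cluster_std=1.0, random_state=0,
)

# Eight extra columns of pure noise.
X_noise = rng.normal(0, 5, size=(300, 8))
X_all = np.hstack([X_signal, X_noise])


def clustering_quality(X):
    """Fit k-means with 3 clusters and return the silhouette score."""
    labels = KMeans(n_clusters=3, n_init=10, random_state=0).fit_predict(X)
    return silhouette_score(X, labels)


score_all = clustering_quality(X_all)
score_signal = clustering_quality(X_signal)

print(f"with noise features:    {score_all:.2f}")
print(f"without noise features: {score_signal:.2f}")
```

Each silhouette score is computed in its own feature space, so treat the comparison as a rough signal rather than a strict test. In real projects there are no labels to check against, so domain knowledge about which features should define similarity matters a lot.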

Feature Selection in Deep Learning

Deep learning models handle large amounts of data.

Too many input features can still cause problems.

Applying feature selection reduces the number of inputs the network must process.

Neural networks can learn patterns automatically.

They still benefit from clean, well-organized input data.

Feature Selection with Genetic Algorithms

Genetic algorithms simulate the process of natural selection.

They evaluate many candidate feature combinations over successive generations.

The best-performing combinations are kept and recombined.

The approach is powerful but slow.
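
A toy version of the idea fits in a few dozen lines of NumPy. This is a sketch, not a production implementation: the data is synthetic, and the fitness function (validation R² minus a small penalty per feature), population size, generation count, and mutation rate are all hypothetical choices.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic regression data: only columns 0, 3 and 7 influence the target.
n_samples, n_features = 300, 12
X = rng.normal(size=(n_samples, n_features))
y = 3 * X[:, 0] - 2 * X[:, 3] + 1.5 * X[:, 7] + rng.normal(0, 0.5, n_samples)
X_train, X_val = X[:200], X[200:]
y_train, y_val = y[:200], y[200:]


def fitness(mask):
    """Validation R^2 of a least-squares fit on the masked columns,
    minus a small penalty per feature to favour compact subsets."""
    if not mask.any():
        return -np.inf
    A_train = np.column_stack([X_train[:, mask], np.ones(len(X_train))])
    A_val = np.column_stack([X_val[:, mask], np.ones(len(X_val))])
    coef, *_ = np.linalg.lstsq(A_train, y_train, rcond=None)
    residual = y_val - A_val @ coef
    r2 = 1 - np.mean(residual ** 2) / np.var(y_val)
    return r2 - 0.01 * mask.sum()


pop_size, n_generations, mutation_rate = 30, 40, 0.05
# Each individual is a boolean mask over the feature columns.
population = rng.random((pop_size, n_features)) < 0.5

for _ in range(n_generations):
    scores = np.array([fitness(ind) for ind in population])
    order = np.argsort(scores)[::-1]
    parents = population[order[: pop_size // 2]]      # selection: keep the fitter half
    children = []
    while len(children) < pop_size - len(parents):
        a, b = parents[rng.integers(len(parents), size=2)]
        child = np.where(rng.random(n_features) < 0.5, a, b)  # uniform crossover
        child ^= rng.random(n_features) < mutation_rate       # bit-flip mutation
        children.append(child)
    population = np.vstack([parents, np.array(children)])

scores = np.array([fitness(ind) for ind in population])
best_mask = population[np.argmax(scores)]
print("selected columns:", np.flatnonzero(best_mask))
print(f"fitness: {fitness(best_mask):.3f}")
```

Note that the search never tries every combination. It evaluates one population per generation and lets good subsets survive and recombine, which is why it can handle feature counts where exhaustive search is impossible, at the cost of many model fits.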

Feature Selection Kaggle Insights

Feature selection is a common practice among Kaggle users.

Top competitors rely on it heavily.

They test multiple strategies.

They combine different methods to find what works.

This improves their models' scores.

What About Feature Selection Icons?

In UI design, selection icons let users filter data.

They show which data elements are selected.

They are not part of the technical process, but they help users visualize features.

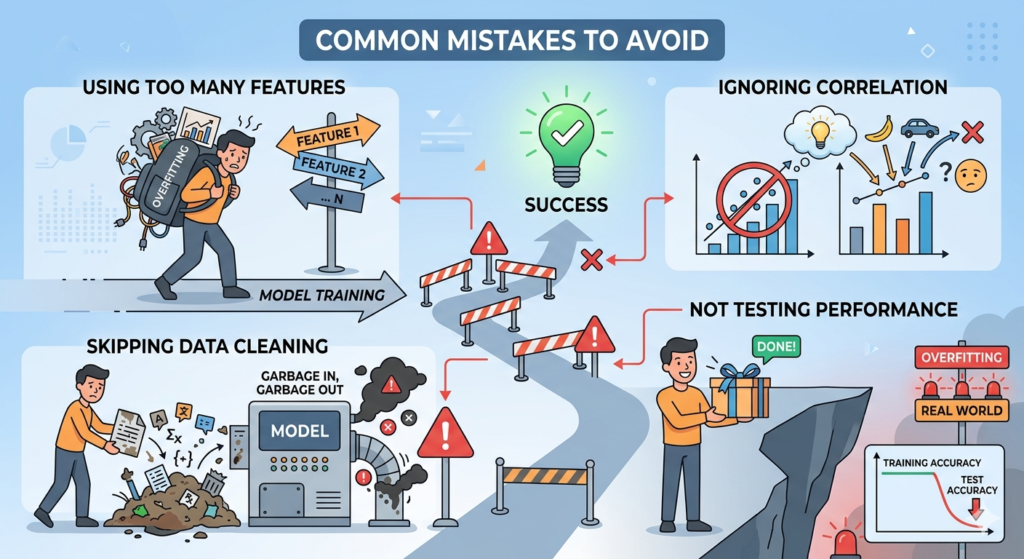

Common Mistakes to Avoid

Many beginners make simple mistakes.

The following choices cause problems:

Using too many features

Ignoring correlation

Skipping data cleaning

Not testing performance

Avoid these errors, because they hurt your results.

Final Thoughts

Feature selection is a powerful technique.

It improves both efficiency and accuracy.

It lets you build a simpler model.

Start with basic methods before moving on to advanced ones.

The right features largely determine your results.

FAQs About Feature Selection

Q: What is feature selection in simple words?

Feature selection means choosing the most useful data for your model. It keeps the inputs that help and removes the ones that do not. The result is a model that runs faster and is often more accurate.

Q: Why is feature selection important in machine learning?

Feature selection improves the performance of machine learning models. It shortens training time. It lowers the risk of overfitting. With less noise in the inputs, the model can pick up real patterns more easily.

Q: What are the main feature selection methods?

There are three main types: filter, wrapper, and embedded methods. Filter methods are fast and simple to implement. Wrapper methods often give better results but take more time to run. Embedded methods select features during model training.

Q: How does feature selection work in AI?

AI systems use feature selection to remove inputs that are irrelevant. This lets the model concentrate on important information. The result is more accurate predictions.

Q: Can selecting important features improve XGBoost models?

Yes, it can. XGBoost provides feature importance scores. You can use them to remove features that have little effect on performance.

Q: Is feature selection needed for deep learning?

Yes, but not always. Deep learning models can handle large amounts of data. They still benefit from clean input data, which improves results.

Q: What is feature selection in clustering?

It removes features that carry no useful information for grouping the data. This improves the quality of the clustering. The resulting groups are clearer and easier to understand.

Q: What is a genetic algorithm in feature selection?

It is an advanced technique. It evaluates many combinations of features. Through repeated evaluation, it identifies the most effective ones.

Conclusion

Feature selection is critical to machine learning development. It lets you concentrate on what matters. Removing unimportant features often leads to better accuracy.

Model complexity decreases while accuracy improves. The model runs faster and is easier to understand.

The basic objective is the same whether you use simple filters or advanced techniques.

Select features that suit your project. Then evaluate your model to confirm the choice.

Improve your system step by step. With good feature selection, intelligent systems perform better.


Author bio:

Zarirah Asif is a creative content writer who loves turning ideas into engaging words. She writes SEO-friendly articles that are easy to read and useful for readers. Her goal is to help brands stand out with quality content. She is always learning and improving her writing skills.
