Knowledge Distillation: A Simple Guide for Better AI

Knowledge distillation is a way to make AI models smaller by training a compact model to imitate a larger one. It helps teams keep most of the larger model's quality while using fewer resources. It also makes AI easier to run on phones, in apps, and on websites.

Large AI models can be powerful. However, they are often expensive to run, need strong hardware, and respond more slowly. Therefore, many teams use smaller models for daily work.

“A smaller model can still be useful when it learns from a stronger model.”

What Knowledge Distillation Means

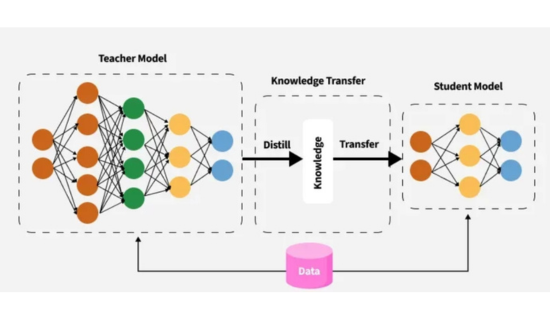

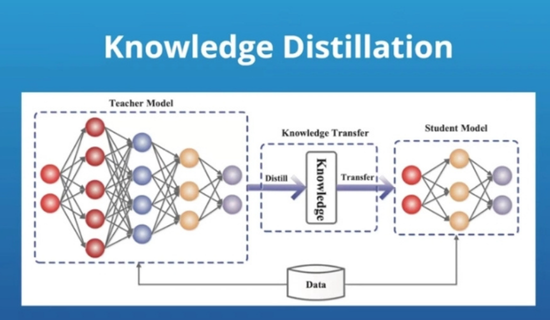

Knowledge distillation uses two models. First, a large model acts as the teacher. Then, a smaller model learns from that teacher.

The teacher provides its predictions as training targets, and these carry patterns and hints beyond the plain labels. As a result, the student model often learns faster. It can also perform well with less labeled data.

This method is useful because small models are easier to deploy. Moreover, they often give quicker answers.
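To make the teacher and student roles concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not a production recipe: the teacher is a hypothetical stand-in (an average of three fixed sigmoid curves), the student is a single sigmoid with two parameters, and names such as `teacher_probability` and `train_student` are invented for this example.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def teacher_probability(x):
    """Hypothetical teacher: an ensemble of three fixed curves, returns P(class = 1)."""
    members = [(2.5, -0.8), (3.0, -1.0), (3.5, -1.2)]
    return sum(sigmoid(w * x + b) for w, b in members) / len(members)

def train_student(inputs, targets, steps=2000, learning_rate=0.5):
    """Fit one sigmoid (weight w, bias b) to the teacher's outputs by gradient descent."""
    w, b = 0.0, 0.0
    n = len(inputs)
    for _ in range(steps):
        grad_w = grad_b = 0.0
        for x, target in zip(inputs, targets):
            error = sigmoid(w * x + b) - target  # cross-entropy gradient at the logit
            grad_w += error * x / n
            grad_b += error / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

def mean_gap(w, b, inputs, targets):
    """Average absolute difference between student and teacher predictions."""
    return sum(abs(sigmoid(w * x + b) - t) for x, t in zip(inputs, targets)) / len(inputs)

# The teacher labels the training inputs; the student then learns from those labels.
inputs = [i / 10 for i in range(-20, 21)]
targets = [teacher_probability(x) for x in inputs]
w, b = train_student(inputs, targets)

gap_before = mean_gap(0.0, 0.0, inputs, targets)  # untrained student
gap_after = mean_gap(w, b, inputs, targets)       # distilled student
print(f"gap before training: {gap_before:.3f}, after: {gap_after:.3f}")
```

The student here has two parameters while the stand-in teacher has six, so the sketch mirrors the idea at toy scale: the smaller model never sees the true labels, only the teacher's outputs.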

Why Knowledge Distillation Matters

AI teams need speed, cost control, and good results. However, large models can slow down real products. Therefore, knowledge distillation helps balance quality and speed.

| Feature | Large Model | Small Model |
|---|---|---|
| Speed | Slower | Faster |
| Cost | Higher | Lower |
| Storage | Larger | Smaller |
| Device Use | Harder | Easier |

The table shows the main reason teams use this method. Additionally, smaller models can support real users with less delay.

“Fast AI feels better to users because it responds without friction.”

How The Process Works

The process starts with a trained teacher model. Next, the teacher predicts answers for training data.

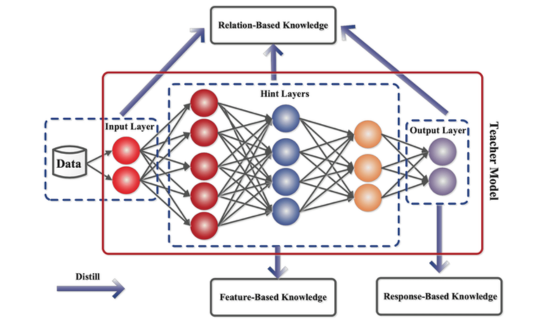

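Usually, the student learns from soft labels, which are the teacher's predicted probabilities for every possible answer. These labels show more detail than simple right or wrong answers, such as which wrong answers the teacher considers close to right. Because of this, the student learns deeper patterns.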
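The sketch below shows one common way soft labels are produced and used. It assumes the teacher outputs raw scores (logits), softens them with a temperature, and trains the student on a blend of a soft-label term and an ordinary hard-label term. The logits, the class names, and the `temperature` and `alpha` values are hypothetical choices for illustration.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a higher temperature gives a flatter result."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, true_index,
                      temperature=2.0, alpha=0.5):
    """Blend a soft-label term (match the teacher) with a hard-label term (match the truth)."""
    teacher_soft = softmax(teacher_logits, temperature)
    student_soft = softmax(student_logits, temperature)
    # KL divergence between the teacher's soft labels and the student's soft predictions.
    soft_term = sum(t * math.log(t / s)
                    for t, s in zip(teacher_soft, student_soft) if t > 0)
    # Ordinary cross-entropy against the single correct class.
    hard_term = -math.log(softmax(student_logits)[true_index])
    # Scaling by temperature squared keeps the soft term comparable across temperatures.
    return alpha * temperature ** 2 * soft_term + (1 - alpha) * hard_term

# Hypothetical teacher scores for the classes [cat, dog, truck].
teacher_logits = [5.0, 3.0, -2.0]
hard_label = [1, 0, 0]                                  # only says "cat"
soft_label = softmax(teacher_logits, temperature=2.0)   # also says "dog is closer than truck"

print("hard label:", hard_label)
print("soft label:", [round(p, 3) for p in soft_label])
print("loss for an untrained student:",
      round(distillation_loss([0.0, 0.0, 0.0], teacher_logits, true_index=0), 3))
```

The hard label carries one fact per example. The soft label also ranks the wrong answers, which is the extra detail the student learns from.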

Knowledge distillation can also use hidden features. For example, the student may be trained to match the teacher's intermediate signals, not only its final answers. Thus, it learns from how the teacher represents the input internally.
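A rough sketch of feature matching follows. It assumes the student's hidden layer is narrower than the teacher's, so a small projection maps student features to the teacher's size before the two are compared with mean squared error. The feature values and projection weights are hypothetical; in practice the projection would be learned along with the student.

```python
def project(features, weights):
    """Map student features to the teacher's feature size with a linear layer (no bias)."""
    return [sum(w * f for w, f in zip(row, features)) for row in weights]

def feature_matching_loss(student_features, teacher_features, weights):
    """Mean squared error between projected student features and teacher features."""
    projected = project(student_features, weights)
    squared = [(p - t) ** 2 for p, t in zip(projected, teacher_features)]
    return sum(squared) / len(teacher_features)

teacher_features = [0.5, -1.0, 2.0, 0.0]   # hypothetical 4-value hidden activation
student_features = [1.0, -0.5]             # hypothetical 2-value hidden activation

# Two candidate 4x2 projections: one untrained, one that happens to align the features.
untrained = [[0.0, 0.0], [0.0, 0.0], [0.0, 0.0], [0.0, 0.0]]
aligned = [[0.5, 0.0], [0.0, 2.0], [2.0, 0.0], [0.0, 0.0]]

print("loss with untrained projection:",
      feature_matching_loss(student_features, teacher_features, untrained))
print("loss with aligned projection:",
      feature_matching_loss(student_features, teacher_features, aligned))
```

During training, this term is usually added to the output-level loss, so the student is pulled toward both the teacher's answers and its internal signals.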

Main Benefits

This method gives several clear gains. First, it reduces model size, which also cuts running costs. Second, it improves speed, so apps can reply faster. Third, it helps AI work on smaller devices.

| Benefit | Why It Helps |
|---|---|
| Lower cost | Uses less compute power |
| Faster replies | Improves user experience |
| Smaller files | Saves storage space |
| Easier launch | Works on more platforms |

These benefits matter for startups and large firms. Moreover, they help teams ship AI features sooner.

Common Use Cases

Knowledge distillation is used in chatbots, search tools, and speech apps. It also helps image tools and recommendation systems.

For example, a shopping app may need quick product suggestions. A small model can make this easier. Also, it can reduce server load.

Healthcare tools may also use smaller models. However, these tools need careful testing. Accuracy and safety must come first.

“Model size should shrink, but trust should never shrink with it.”

Best Practices for Knowledge Distillation

Start with a strong teacher model. Then, choose a student model that fits your use case. Also, test the student on real tasks.

Use clean data whenever possible. Moreover, compare speed, cost, and quality. This helps you choose the best version.
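One way to compare candidates is a small harness that records accuracy and latency for each model, then picks the fastest one that still meets a quality bar. The sketch below assumes each model is exposed as a plain `predict` function. The two stand-in models, the toy task, and the 0.95 accuracy threshold are hypothetical.

```python
import time

def evaluate(predict, examples, repeats=3):
    """Measure accuracy and average seconds per call for one candidate model."""
    correct = sum(1 for x, label in examples if predict(x) == label)
    start = time.perf_counter()
    for _ in range(repeats):
        for x, _label in examples:
            predict(x)
    elapsed = time.perf_counter() - start
    return {
        "accuracy": correct / len(examples),
        "seconds_per_call": elapsed / (repeats * len(examples)),
    }

def pick_fastest(reports, min_accuracy):
    """Among candidates that meet the quality bar, return the name of the fastest."""
    good_enough = {name: r for name, r in reports.items()
                   if r["accuracy"] >= min_accuracy}
    if not good_enough:
        return None
    return min(good_enough, key=lambda name: good_enough[name]["seconds_per_call"])

# Hypothetical stand-ins: the "teacher" does extra work, the "student" is cheap but slightly off.
def teacher_predict(n):
    sum(i * i for i in range(2000))  # simulated heavy computation
    return n >= 50

def student_predict(n):
    return n >= 48

examples = [(n, n >= 50) for n in range(100)]
reports = {
    "teacher": evaluate(teacher_predict, examples),
    "student": evaluate(student_predict, examples),
}
choice = pick_fastest(reports, min_accuracy=0.95)

for name, report in reports.items():
    print(name, report)
print("chosen:", choice)
```

Setting the accuracy threshold first and optimizing speed second keeps the comparison from rewarding a model that is fast but no longer useful.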

Do not chase small size alone. Instead, protect useful results. Therefore, knowledge distillation should include testing at each step.

Challenges To Watch

The student model may lose some accuracy. Also, it may copy teacher mistakes. Therefore, teams must review results carefully.
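A simple review step is to line up teacher predictions, student predictions, and true labels, then separate errors the student inherited from the teacher from errors it introduced on its own. The function name and the sample predictions below are hypothetical and only illustrate the bookkeeping.

```python
def error_report(teacher_preds, student_preds, labels):
    """Summarize accuracy, agreement, and where the student's errors come from."""
    rows = list(zip(teacher_preds, student_preds, labels))
    n = len(rows)
    return {
        "teacher_accuracy": sum(t == y for t, s, y in rows) / n,
        "student_accuracy": sum(s == y for t, s, y in rows) / n,
        "agreement": sum(t == s for t, s, y in rows) / n,
        # Teacher was wrong and the student repeated the same wrong answer.
        "inherited_errors": [i for i, (t, s, y) in enumerate(rows) if t != y and s == t],
        # Teacher was right but the student got it wrong.
        "new_errors": [i for i, (t, s, y) in enumerate(rows) if t == y and s != y],
    }

labels        = ["cat", "dog", "cat", "dog", "cat"]
teacher_preds = ["cat", "dog", "dog", "dog", "cat"]
student_preds = ["cat", "dog", "dog", "cat", "cat"]

report = error_report(teacher_preds, student_preds, labels)
for key, value in report.items():
    print(key, value)
```

Inherited errors point to problems in the teacher or the data, while new errors point to a student that is too small or undertrained, so the two lists call for different fixes.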

Another issue is poor training data. If the data is weak, the student may learn weak habits. So, clean examples matter a lot.

Sometimes, the teacher model is far more complex than the student. In that case, the student may struggle to imitate it. However, better tuning can help.

Future Of Knowledge Distillation

AI is moving toward faster and cheaper systems. Therefore, knowledge distillation will stay important. It supports useful AI without huge costs.

More teams now need private, local, and mobile AI. Because of that, demand for small models will keep growing. Also, edge devices will benefit.

“The future of AI is not only bigger. It is also smarter, faster, and leaner.”

FAQs

1. What is knowledge distillation?

In simple terms, it is a way to train a small model from a larger model.

2. Why do teams use knowledge distillation?

They use it to cut costs, improve speed, and save storage.

3. Is a small model always less accurate?

Not always. It depends on training quality and testing.

4. Can knowledge distillation work for chatbots?

Yes, it can help chatbots answer faster and at lower cost.

5. Does knowledge distillation need a teacher model?

Yes, the teacher model guides the smaller student model.

6. Is this method useful for mobile apps?

Yes, smaller models can run better on limited devices.

7. Can the student model copy teacher errors?

Yes, so teams should test outputs with care.

8. Is knowledge distillation only for deep learning?

Not strictly, but it is mostly used with deep learning models.

9. Does this reduce cloud costs?

Yes, smaller models often need less compute power.

10. Who should use knowledge distillation?

AI teams, app builders, and product teams can all use it.

Author Bio

Zarirah Asif is a creative content writer who loves turning ideas into engaging words. She writes SEO-friendly articles that are easy to read and useful for readers. Her goal is to help brands stand out with quality content, and she is always learning and improving her writing skills.
